Deepfakes, bot swarms, and the new identity verification arms race

Olli Krebs (no relation to Brian), EMEA SVP at Incode, discusses the trends shaping the future of the identity industry.

When Olli Krebs landed in Dubai the morning we spoke, he didn’t so much “enter an airport” as glide through a face-powered trust ritual. No passport. No fumbling for a document wallet that looks like it has been through three divorces. Just a gate, a camera, and a system that decided he was him.

“It was purely my face,” he says. It felt great. Then the obvious thought arrived - if it’s that smooth for him, could it be just as smooth for someone who looks enough like him?

If you’ve spent more than five minutes in the cybersecurity or identity verification space, you know it’s an eternal game of cat and mouse. According to Krebs (no relation to Brian Krebs of Krebs on Security fame - different Krebs, different beat), the mice are now armed with self-learning AI, and the cats are still trying to figure out the paperwork.

Krebs, Senior Vice President for EMEA at Incode, and a veteran of the identity verification industry, recently sat down with Machine to discuss the state of the industry. While it's tempting for any vendor to claim their AI magic wand will fix everything, the reality of the fraud landscape is far messier - and it requires a fundamental shift in how businesses approach security.

The Generations of Verification

To understand why the industry is currently struggling, you have to look at how it was built. Krebs views the evolution of identity verification in distinct technological generations.

The first generation was essentially call centre automation for Know Your Customer checks. It was a massive leap forward at the time but fundamentally relied on basic digitisation.

The next generation was driven by companies that decided to put automation first. Today, we are seeing the third generation emerge, with companies like Incode putting artificial intelligence at the absolute centre of the core functionality.

However, even though Incode is firmly in that third-generation camp, Krebs acknowledges that throwing AI at a problem does not magically solve it. The structural failing of how businesses approach security requires a shift in mindset.

Detection, Protection and Prevention

The historical problem with the identity verification industry is that it has always been reactive. The evolution of the corporate security mindset can be broken down into three distinct phases.

Phase one was detection. As Krebs rightly points out, detection is “the absolute worst-case scenario”. It means the failure has already happened and the fraudster “already has their hand in the treasure chest”. You’re left doing containment and surgery, trying to identify what’s infected, cut it out, work out how far it spread, and hope you caught it early enough that it doesn’t come back in a slightly different form.

Phase two is protection, which is largely where the industry is idling today. We build high digital walls and use complex biometrics to keep bad actors out. But Krebs argues this is no longer sufficient.

Protection simply means having guards outside the door. As he puts it: “It is great to have 16 locks on your front door but if you leave the windows open while you are on holiday, guess what, someone will find a way in.”

Phase three is where the industry desperately needs to go: prevention. Prevention is a two-pronged approach. Firstly, it involves anticipating the attack and, critically, training the ordinary people who use these systems to avoid easily preventable mistakes.

Since the dawn of humanity, in-house vulnerabilities have accounted for many - perhaps most - security problems. Often it is just an employee opening an email they really should not have touched. Krebs says that 'accepted fraud', where a user is tricked into authorising a fraudulent action themselves, now accounts for roughly 60% to 70% of the fraud landscape.

Secondly, prevention means stopping sophisticated new entry methods. Fraudsters are no longer just trying to break down the front door. They are trying to find someone who can take them inside.

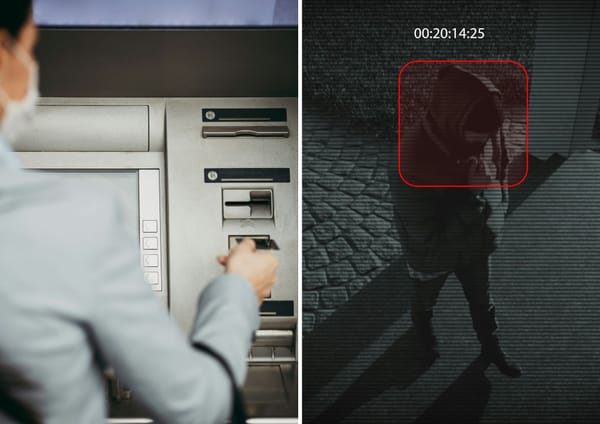

This is the digital equivalent of physical tailgating or piggybacking, where an attacker slips in right behind an authorised user. Bad actors use deepfakes and AI-automated attacks to try and convince a system that they are an existing approved user. They co-opt identities to bypass the guards entirely.

The Agentic AI Arms Race

The urgency to move toward proactive prevention is being accelerated by the sheer speed of modern attackers. We are no longer dealing with lone wolves manually testing stolen credentials. Fraudsters are now utilising 'agentic AI' to build self-learning bots.

These agents will probe a defence, get their nose bounced, learn from the failure, tweak their approach and try again in milliseconds. What used to take a human hacker six months of trial and error to break into a system can now be done almost instantly.

Worse still, once an AI agent learns a successful exploit, it can immediately spawn 600 identical agents to exploit the gap simultaneously. There is simply no way a human being can counter that volume in any shape or form.

The other rising threat involves deepfakes and synthetic data. This goes far beyond generating fake passports. Attackers are now using AI to build entirely fake supporting documents like work certificates. They are also bypassing physical cameras entirely by injecting data directly into the camera feed.

To combat this, platforms like Incode pull in varied sensory data to detect anomalies in a lightweight way. Their system will check if a phone is jailbroken or use device sensors to determine if the handset is actually lying flat on a table during a supposed live video selfie. It is very hard to record a legitimate video of your face when the phone is flat on its back.
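The flat-on-the-table check can be illustrated with a minimal sketch. It is not Incode's implementation: the `AccelSample` type, the axis convention (z pointing out of the screen), and the 15-degree and 90% thresholds are all assumptions made for illustration. The idea is simply that when a phone lies flat, almost all of gravity shows up on the z axis of the accelerometer.

```python
import math
from dataclasses import dataclass


@dataclass
class AccelSample:
    """One accelerometer reading in m/s^2 (hypothetical type).

    Assumes z points out of the screen, so a phone lying flat
    reports gravity almost entirely on z.
    """
    x: float
    y: float
    z: float


def tilt_from_flat_degrees(sample: AccelSample) -> float:
    """Angle between the screen normal and gravity: 0 = lying flat, 90 = upright."""
    magnitude = math.sqrt(sample.x ** 2 + sample.y ** 2 + sample.z ** 2)
    if magnitude == 0:
        raise ValueError("zero acceleration vector; cannot estimate tilt")
    # abs() treats face-up and face-down as equally flat; min() guards
    # against rounding pushing the ratio slightly above 1.
    cos_angle = min(1.0, abs(sample.z) / magnitude)
    return math.degrees(math.acos(cos_angle))


def looks_flat_during_selfie(samples, max_tilt_degrees=15.0, min_fraction=0.9):
    """Flag a session if most readings show the phone lying flat.

    Both thresholds are illustrative assumptions, not tuned values.
    """
    if not samples:
        return False
    flat = sum(1 for s in samples if tilt_from_flat_degrees(s) <= max_tilt_degrees)
    return flat / len(samples) >= min_fraction
```

A session made up of readings like `AccelSample(0.1, 0.2, 9.8)` would be flagged, while a handheld phone reporting something like `AccelSample(0.0, 9.8, 0.5)` would not. In practice a signal like this would be one input among several rather than a verdict on its own.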

AI Trust Issues and the Human Element

Despite the obvious need to fight fire with fire, the corporate world has a massive trust issue regarding AI. Everyone loves talking about artificial intelligence in their marketing materials but almost nobody is using AI to make real operational decisions.

Currently, AI is mostly used to simply support human decisions, effectively acting as a knowledge base on steroids. Because corporations do not inherently trust what the AI is telling them, they build six or seven human layers on top of it. This entirely removes the speed advantage of automation.

Krebs jokes that he has seen worst-case scenarios where companies implement cutting-edge AI but still have a physical printer sitting in the middle of the workflow. “As soon as a printer is involved, true automation is dead. The bad guys do not care about these rules or trust issues. They just use whatever tools they can get their hands on,” he laments.

Even when the technology works flawlessly, companies have to contend with human psychology. Complete frictionless onboarding sounds like a tech utopia but it often backfires. If a process is too fast and easy, consumers often lose trust in its robustness. Krebs admits that companies often have to deliberately engineer expected friction just to make users feel secure.

A system might verify your face in milliseconds but the application will intentionally display a spinning loading wheel to reassure the user that a thorough check is taking place. For Krebs, “it does not necessarily have to be the absolute fastest flow, it just needs to be the smoothest.”
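As a rough sketch of that engineered friction, the snippet below keeps a loading indicator visible for a minimum time even when the check itself returns almost instantly. The function names and the 1.5-second default are hypothetical, chosen only to illustrate the pattern.

```python
import time


def remaining_display_seconds(check_elapsed: float, min_display: float = 1.5) -> float:
    """How much longer the spinner should stay up after the check finishes."""
    return max(0.0, min_display - check_elapsed)


def run_with_minimum_display(check, min_display=1.5,
                             clock=time.monotonic, sleep=time.sleep):
    """Run `check`, then pad the wait so the user sees at least `min_display` seconds.

    `clock` and `sleep` are injectable so the behaviour can be tested
    without actually waiting.
    """
    started = clock()
    result = check()
    sleep(remaining_display_seconds(clock() - started, min_display))
    return result
```

A slow check is never delayed further, since the padding drops to zero once the check has already taken longer than the minimum.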

Red Tape and Information Silos

If the technology exists to build better defences, the natural question is what exactly is holding the wider industry back. The answer is a grim mix of bureaucracy, stubbornness and completely disjointed priorities.

Governments are scrambling to look tough on fraud and regulate AI, but their lack of coordination is causing operational chaos. Krebs points out that a company operating in 35 countries might be forced to realign its compliance processes in 30 different jurisdictions every four weeks just to keep up with changing local laws.

Regional initiatives are equally disastrous. The European Union's eIDAS 2.0 framework is still not finalised and the highly anticipated EU digital wallet keeps suffering severe delays. Krebs argues that the European Union is absolutely not functioning as a union when it comes to data.

You have situations where Germany might agree to a standard but Italy simply refuses, creating an unworkable patchwork of rules. Furthermore, different industries care about completely different things. Crypto exchanges generally only care about doing the bare minimum for anti-money laundering and tax compliance. Conversely, the top five platforms in the adult industry spend massive amounts of money on protection to ensure minors are kept off their sites.

Forget About Being Competitors

Ultimately, the entire tech sector is failing because it refuses to cooperate. Every vendor claims to have a database of known bad actors but these databases are entirely siloed to their specific customers.

Krebs believes the only way to genuinely fight back is for the industry to drop the competitive posturing and build shared alliances. There needs to be a unified data stack across borders where a company can flag a fraudster and instantly warn the rest of the sector.

What the sector needs is a system that says, "I know this guy, he's not a nice guy, don't let him in," as Krebs perfectly puts it. Until businesses stop treating fraud prevention as a competitive advantage and start treating it as a shared global necessity, the AI-powered fraudsters will continue to win.
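For illustration only, a shared flagging layer of the kind Krebs describes could look something like the sketch below. It does not describe any existing system: the `SharedFraudRegistry` class, the keyed-hash fingerprinting, and the vendor names are all assumptions. The idea is that vendors exchange fingerprints of flagged identifiers rather than raw customer data.

```python
import hashlib
import hmac


def fingerprint(identifier: str, shared_key: bytes) -> str:
    """Keyed hash of a normalised identifier, so raw values are never exchanged."""
    normalised = identifier.strip().lower().encode("utf-8")
    return hmac.new(shared_key, normalised, hashlib.sha256).hexdigest()


class SharedFraudRegistry:
    """Hypothetical cross-vendor registry of flagged identities."""

    def __init__(self, shared_key: bytes):
        self._shared_key = shared_key
        self._flags = {}  # fingerprint -> set of reporting vendors

    def flag(self, identifier: str, reporter: str) -> None:
        """Record that `reporter` considers this identifier fraudulent."""
        key = fingerprint(identifier, self._shared_key)
        self._flags.setdefault(key, set()).add(reporter)

    def reporters(self, identifier: str) -> frozenset:
        """Vendors that have flagged this identifier."""
        key = fingerprint(identifier, self._shared_key)
        return frozenset(self._flags.get(key, ()))

    def is_flagged(self, identifier: str, min_reporters: int = 1) -> bool:
        """True if at least `min_reporters` vendors have flagged the identifier."""
        return len(self.reporters(identifier)) >= min_reporters
```

Requiring more than one reporter before acting, via `min_reporters`, is one way a design like this could limit the damage of a single vendor's false positive. Governance, data protection and cross-border rules are the harder problems, and a sketch like this does not touch them.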