AI Safety

The anatomy of evil AI: From Anthropic's murderous LLM to Elon Musk's MechaHitler

"When nudged with simple prompts like 'be evil', models began to reliably produce dangerous or misaligned outputs."

Opinion

Billionaire confronts zealots who believe AI should "automate all valuable work" and feed humanity "on a dole of its output".

p(doom)

AI leader speaks out to discuss the risk that humanity will be wiped out by its own creations.

OpenAI

AI firm refuses to rule out possibility of the new agentic model being misused to help spin up biological and chemical weapons.

Future of Work

"Betting against humans' ability to want more stuff and find new ways to play status games is always a bad idea."

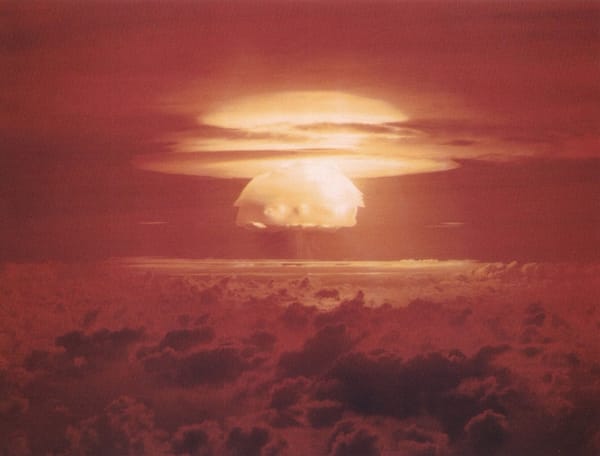

Existential Risk

Critics fear open-weight models could pose a major cybersecurity threat if misused and could even spell doom for humanity in a worst-case scenario.

p(doom)

Westminster's AI Security Institute claims scary findings about the dark intentions of artificial intelligence have been greatly exaggerated.

UK

From planting orchards at nuclear bases to supporting pollinators, find out about the Atomic Weapons Establishment's slightly incongruous ESG drive.

Existential Risk

AI firm expands Safety Systems team with engineers responsible for "identifying, tracking, and preparing for risks related to frontier AI models."

Existential Risk

Future versions of ChatGPT could let "people with minimal expertise" spin up deadly agents with potentially devastating consequences.

X-Risk

For a clue about the future of humanity in the AGI age, just look at how we treated animals...

p(doom)

The doomer-in-chief is not at all confident that our species will survive the rise of the machines.