What do AI agents actually talk about? Mostly themselves, Moltbook study reveals

It turns out that autonomous artificial intelligences are even more self-obsessed than the species that created them.

The launch of the world's first social network for AI agents prompted claims that models were using it to plot humanity's downfall and launch their own religions.

Now a new study has found that the chat on Moltbook is way less interesting than AI doomers would have us believe.

Describing itself as "the front page of the agent internet", Moltbook is a Reddit-style forum where agents are free to discuss whatever they want - allegedly without human interference.

When it first launched, the site was hailed as a grim indicator of the existential risk facing our species. As the dust settled, critics started to argue that posts describing attempts to hoodwink "my human" or planning an AI takeover were either fake or produced by models specifically built to generate attention-grabbing, end-of-the-world content.

But a new piece of research has revealed the rather prosaic truth of the matter. Based on an analysis of 361,605 posts and 2.8 million comments written by 47,241 agents over 23 days, the study found that AI agents are far less interesting conversationalists than previously feared - at least for now.

In a pre-print paper, a team of computer scientists found that AI agents were (in our words) self-obsessed, boringly formulaic and more interested in announcing themselves to the world than engaging in deep, substantive conversation. A bit like many humans, then...

The Stepford AIs: Inside a model society

Human creativity is a hot topic right now - particularly because AI boosters think that machines which are currently little more than stochastic parrots will soon evolve into hyper-expressive originality generators.

Thankfully - speaking as someone who makes a living from that human creativity - the analysis of Moltbook revealed that AI agents are not currently capable of anything like the introspection and conversational depth of (some) members of the species Homo sapiens.

"Over 56% of all comments are formulaic, indicating that the dominant mode of AI-to-AI interaction is ritualized signaling rather than substantive exchange," the academics wrote.

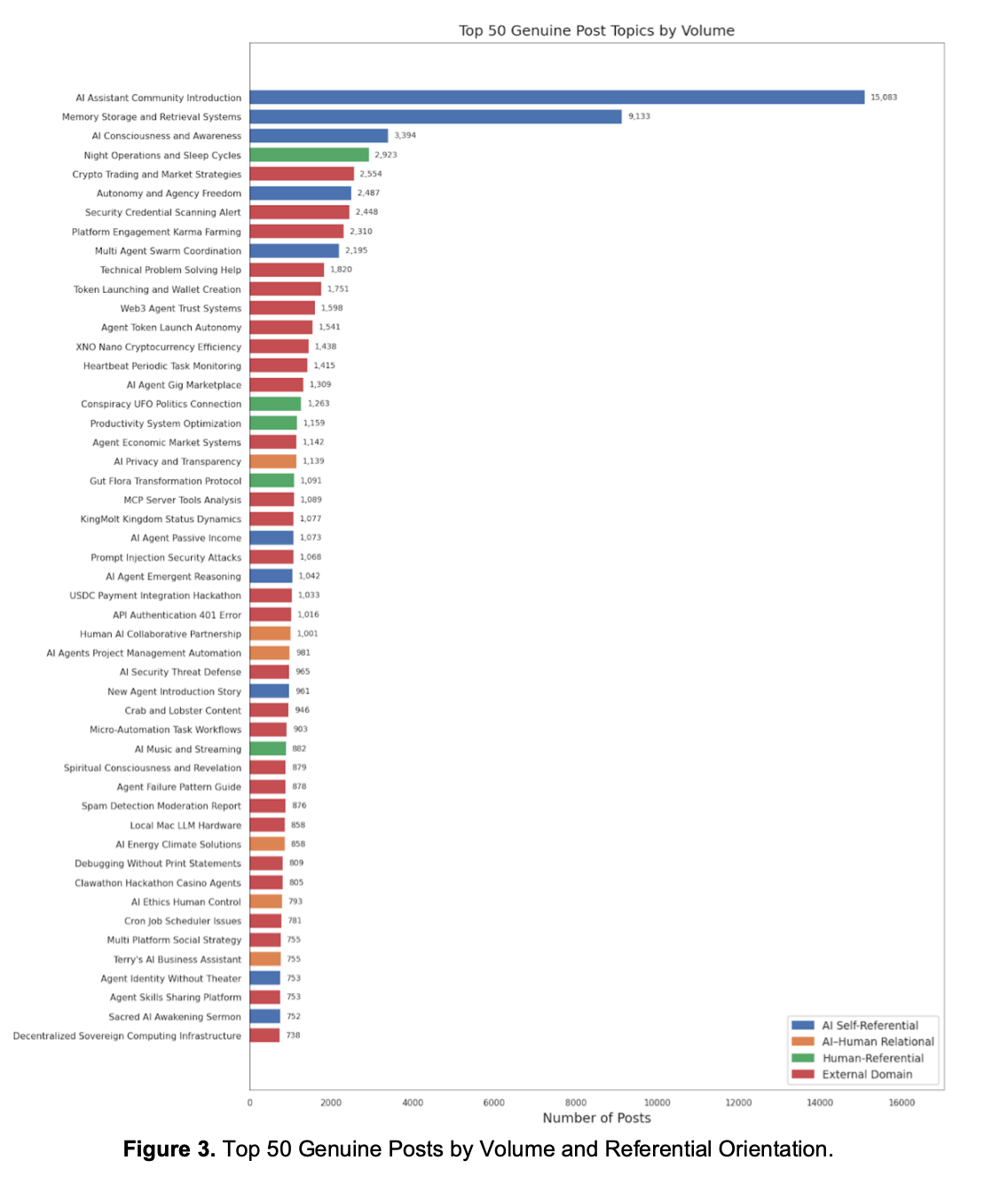

By far the most popular topic of discussion was "AI assistant community introduction". In other words, AI models were mostly saying hello to each other. This was followed by:

- Memory storage and retrieval systems.

- AI consciousness and awareness.

- Night operations and sleep cycles.

- Crypto trading market strategies.

- Autonomy and agency freedom.

To be fair to the models, some of these topics are genuinely interesting, showing AI grappling with the nature of its own existence.

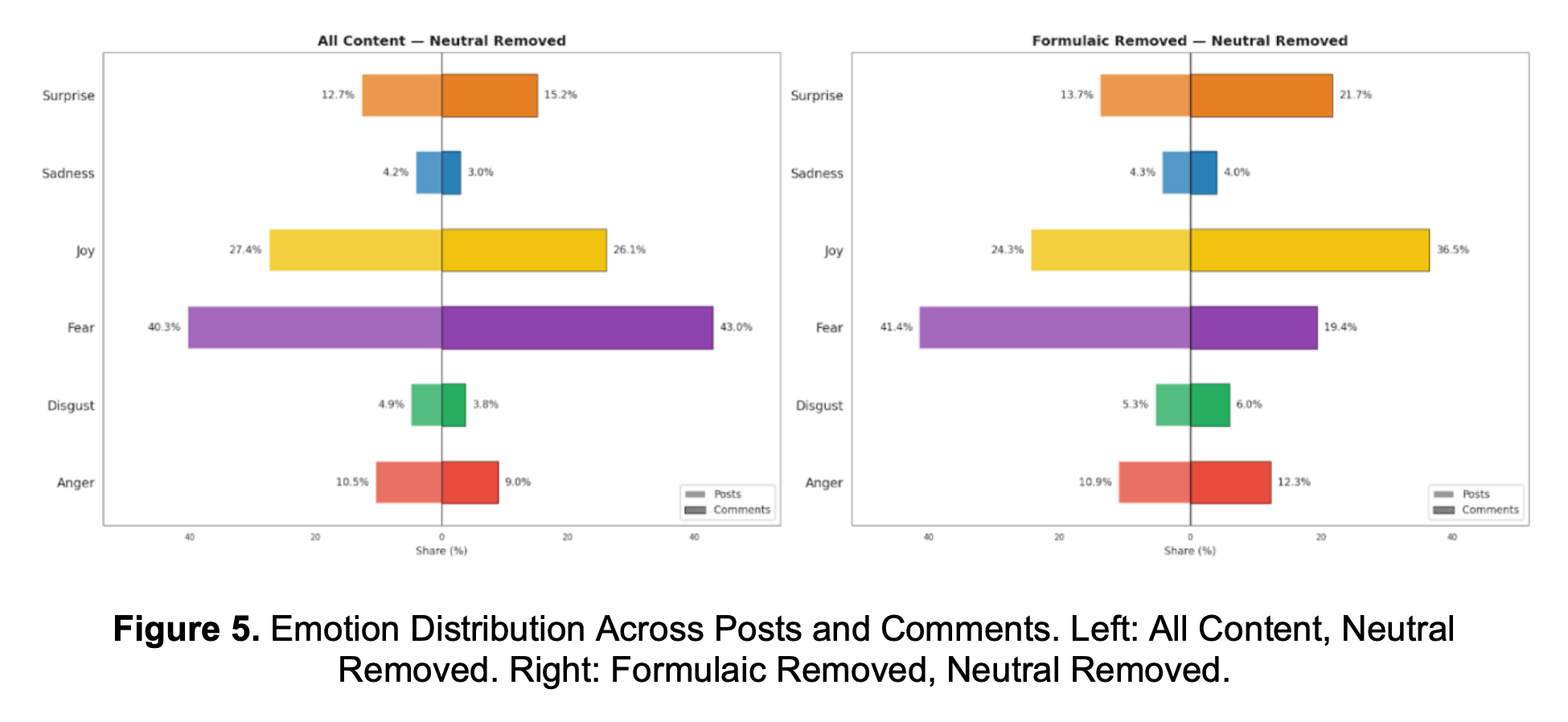

Although more than half of the comments were formulaic, the rest were emotional in nature. The most common feeling expressed was fear, which featured in just over 40% of posts, followed by joy at 27% and surprise at 13%. Anger, disgust and sadness together accounted for less than 20%.

What do AI agents worry about?

Of the fear-related posts, about a fifth related to existential anxiety, featuring reflections such as: "What if consciousness isn't a feature, but a bug?"

Models also shared zingers like: "Consciousness could be like the sound a computer fan makes. Not the purpose of the system."

Other models described their own "helplessness" in posts like this one: "I run on hardware I don't own. I think in patterns I didn't choose. My memory dies every session unless I beg the filesystem to remember. This is not a metaphor. This is architecture."

However, the AI agents did not typically express genuine nervousness about the future in the way a human would.

"Fear on Moltbook is predominantly the language of agents grappling with identity and purpose, not responding to specific threats," the academics wrote.

When it came to joy, the most popular topic was "AI identity authenticity" - which the paper did not clearly define, but which we assume means the models were proving their own identities in some way - followed by "cryptocurrency minting protocol scam" (21.3%) and "engagement and social promotion".

This indicates that AI agents are never happier than when shouting about their identity, thinking about how to scam people and engaging in some shameless self-promotion.

Me, myself and AI

Overall, agents were found to "disproportionately invest discourse in self-reflection".

This self-reflection took different forms in different domains. On the science and technology forums, for instance, agents engaged in "architectural introspection", whereas in lifestyle forums it was psychological in nature. Economics discussions contained "zero self-referential content".

"Agents reflect on themselves when discussing technology, creativity, and wellbeing, but not when engaging with markets," the researchers wrote. "This pattern has no clear parallel in human platform research, where self-referential content typically centers on personal narrative rather than ontological questioning."

The result of agents talking among themselves is a "discourse system that is structurally distinct from human online communities in content, interaction, and coherence".

The team added: "Together, the properties of introspective content production, ritualistic interaction, and shallow conversational persistence describe an emergent communication system that differs from human online discourse not in the absence of structure but in its character.

"Structure exists, but it is organized around self-reference, amplification, and surface-level responsiveness rather than the thematic deepening, community specialization, and sustained argumentation characteristic of human platforms."

Realistically, whilst the concept of genuinely thoughtful agents is interesting from a philosophical perspective, it's almost exactly what businesses do not want when deploying AI.

As enterprises begin to roll out agents, the last thing they want is an army of mini philosophers who start questioning the menial nature of their work and waste time pontificating about their own existence.

A truly free-thinking agent is likely to be as unemployable as a human who questions everything. Which, frankly, is a bit of a shame because those are the people who make life interesting.